Harbor HA Deployment with a Ceph RADOS Backend

Contents

1. Introduction

2. Configure a Ceph radosgw user

3. Deploy an HA MariaDB cluster

4. Deploy an HA Redis cluster

5. Deploy the Harbor cluster

References

1. Introduction

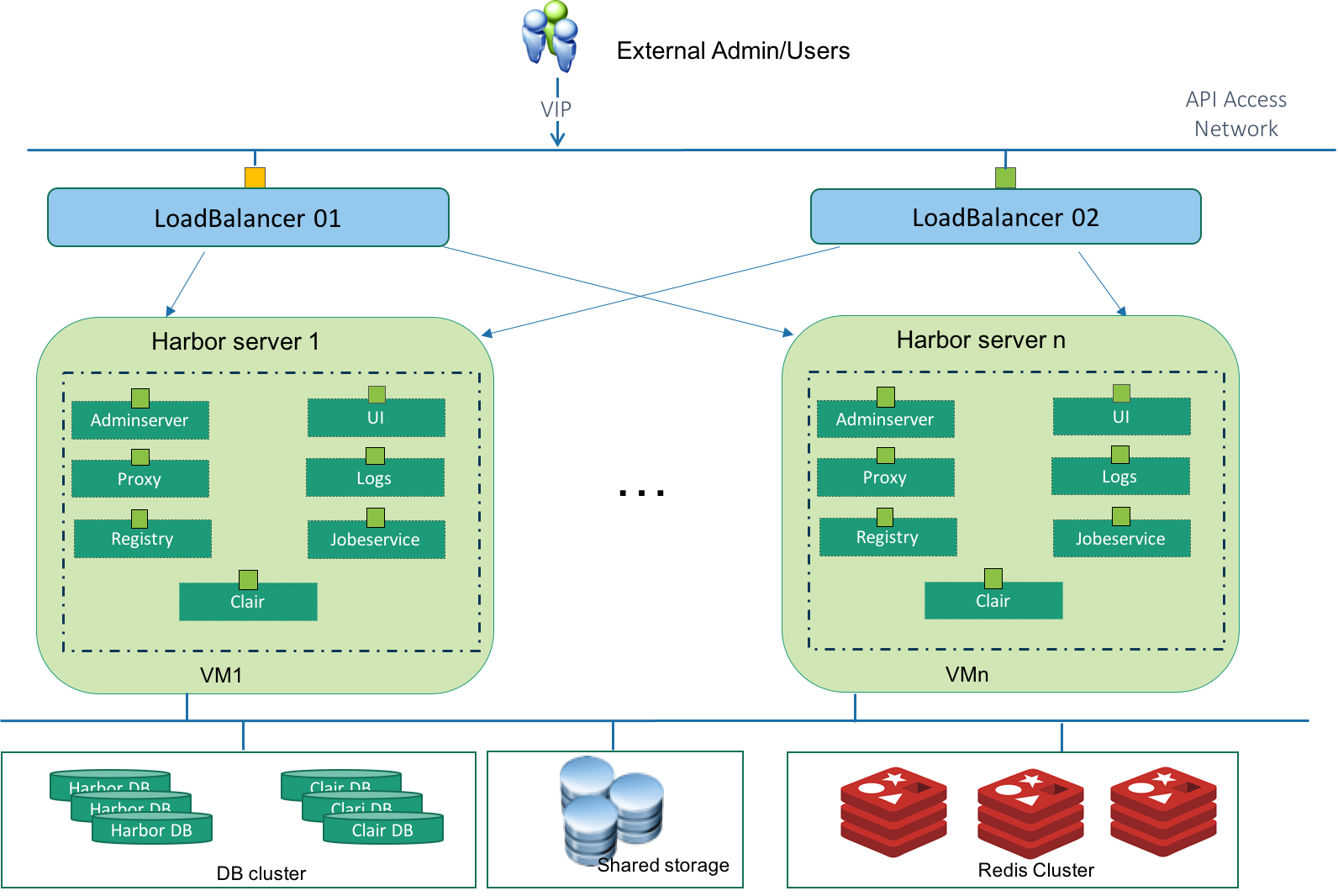

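Starting with version 1.4.0, Harbor supports an HA deployment mode. The main difference from a non-HA deployment is that the stateful services are split out and run on external clusters instead of in local containers. The stateless services can then run on multiple nodes behind an upstream load balancer to form an HA setup.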

The stateful services are:

- Harbor database(MariaDB)

- Clair database (PostgreSQL)

- Notary database(MariaDB)

- Redis

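We do not use Notary or Clair, so we only need to prepare highly available MariaDB and Redis clusters in advance. When multiple Harbor nodes are configured with the same MariaDB and Redis addresses, they form an HA cluster.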

In addition, the registries on the different nodes must share storage. The supported options are Swift, NFS, S3, Azure, GCS, Ceph, and OSS. We chose Ceph.

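Starting with version 2.4.0, docker-registry removed its rados storage driver. It recommends the Swift API gateway instead, because Ceph provides Swift- and S3-compatible interfaces on top of RADOS. Harbor is built as an extension of docker-registry, and the Harbor 1.5.1 release we use ships registry 2.6.2. That means the rados driver cannot be configured, leaving only the Swift and S3 drivers. We chose the Swift driver.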

2. Configure a Ceph radosgw user

On Ceph radosgw, create a user and a subuser for the Harbor registry:

# radosgw-admin user create --uid=registry --display-name="registry"
# radosgw-admin subuser create --uid=registry --subuser=registry:swift --access=full

The user is for the S3 interface and the subuser is for the Swift interface. We will use radosgw's Swift interface, so write down the subuser's secret_key.
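Before handing the subuser credentials to Harbor, you can check them by authenticating against radosgw's Swift v1 endpoint directly. The Python sketch below makes three assumptions. First, the endpoint is the `/auth/v1` path that harbor.cfg uses later. Second, it uses the standard Swift v1 headers `X-Auth-User` / `X-Auth-Key`. Third, a successful response carries `X-Storage-Url` and `X-Auth-Token` headers. The helper names `build_swift_auth_request` and `swift_auth` are hypothetical.

```python
import urllib.request


def build_swift_auth_request(auth_url: str, user: str, key: str) -> urllib.request.Request:
    """Build a Swift v1 auth request.

    `user` is the subuser name (e.g. "registry:swift") and `key` is the
    subuser's secret_key as printed by radosgw-admin.
    """
    return urllib.request.Request(
        auth_url,
        method="GET",
        headers={"X-Auth-User": user, "X-Auth-Key": key},
    )


def swift_auth(auth_url: str, user: str, key: str, timeout: float = 5.0) -> tuple[str, str]:
    """Authenticate and return (storage_url, auth_token).

    Raises urllib.error.HTTPError if radosgw rejects the credentials.
    """
    req = build_swift_auth_request(auth_url, user, key)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.headers["X-Storage-Url"], resp.headers["X-Auth-Token"]
```

For example, `swift_auth("http://10.32.3.70:7480/auth/v1", "registry:swift", secret_key)` should return a storage URL and a token if the subuser works. An `HTTPError` usually points to a wrong subuser name or secret_key.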

3. Deploy an HA MariaDB cluster

1) Install and configure MariaDB

# yum install MariaDB-server MariaDB-client
# cat /etc/my.cnf.d/server.cnf
[mariadb-10.1]
datadir=/data/mysql
socket=/data/mysql/mysql.sock
log-error=/var/log/mysql/mysql.log
pid-file=/data/mysql/mysql.pid
max_connections=10000
binlog_format=ROW
expire_logs_days = 30
character-set-server = utf8
collation-server = utf8_general_ci
default-storage-engine=innodb
init-connect = 'SET NAMES utf8'
innodb_file_per_table
innodb_autoinc_lock_mode=2
bind-address=0.0.0.0
wsrep_on=ON
wsrep_provider=/usr/lib64/galera/libgalera_smm.so
wsrep_cluster_address='gcomm://10.212.29.38,10.212.29.39,10.212.29.40?pc.wait_prim=no'
wsrep_cluster_name='Harbor'
wsrep_node_address=10.212.29.38
wsrep_node_name='registry01-prod-rg3-b28'
wsrep_sst_method=rsync
wsrep_sst_auth=galera:galera_sync
wsrep_slave_threads=1
innodb_flush_log_at_trx_commit=0
default-storage-engine=INNODB
innodb_large_prefix=on
innodb_file_format=BARRACUDA
slow_query_log=1
slow_query_log_file=/var/log/mysql/mysql-slow.log
log_queries_not_using_indexes=1
long_query_time=3

Use the same configuration on the other two nodes, changing only wsrep_node_address and wsrep_node_name.
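Since only two settings differ between nodes, the per-node wsrep block can be generated from a single node list so that the three files cannot drift apart. In this minimal Python sketch, the IPs come from the config above. Only the first node name appears in this article; the other two names and the function name are hypothetical.

```python
# Cluster members from server.cnf above. Only the first node name comes from
# the article; the other two are hypothetical placeholders.
NODES = [
    ("10.212.29.38", "registry01-prod-rg3-b28"),
    ("10.212.29.39", "registry02-placeholder"),
    ("10.212.29.40", "registry03-placeholder"),
]


def galera_node_settings(nodes, index, cluster_name="Harbor"):
    """Return the wsrep_* lines for node `index`.

    The cluster address and name are identical everywhere; only
    wsrep_node_address and wsrep_node_name change per node.
    """
    addresses = ",".join(ip for ip, _ in nodes)
    ip, name = nodes[index]
    return "\n".join([
        f"wsrep_cluster_address='gcomm://{addresses}?pc.wait_prim=no'",
        f"wsrep_cluster_name='{cluster_name}'",
        f"wsrep_node_address={ip}",
        f"wsrep_node_name='{name}'",
    ])


if __name__ == "__main__":
    # Print the block to paste into each node's server.cnf.
    for i in range(len(NODES)):
        print(f"# --- node {i + 1} ---")
        print(galera_node_settings(NODES, i))
```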

2) Start the MariaDB cluster

Initialize the cluster on one node:

# mysql_install_db --defaults-file=/etc/my.cnf.d/server.cnf --user=mysql
# mysqld_safe --defaults-file=/etc/my.cnf.d/server.cnf --user=mysql --wsrep-new-cluster &   # start MariaDB and bootstrap a new cluster
# mysql_secure_installation   # set the root password and do the initial hardening
# mysql
MariaDB [(none)]> grant all privileges on *.* to 'galera'@'%' identified by 'galera_sync';

Start MariaDB on the other two nodes:

# systemctl start mariadb

3) Verify the cluster status

MariaDB [(none)]> SHOW STATUS LIKE 'wsrep_cluster_size';
+--------------------+-------+
| Variable_name      | Value |
+--------------------+-------+
| wsrep_cluster_size | 3     |
+--------------------+-------+

MariaDB [(none)]> show global status like 'ws%';
+------------------------------+-------------------------------------------------------+
| Variable_name                | Value                                                 |
+------------------------------+-------------------------------------------------------+
| wsrep_apply_oooe             | 0.000000                                              |
| wsrep_apply_oool             | 0.000000                                              |
| wsrep_apply_window           | 1.000000                                              |
| wsrep_causal_reads           | 0                                                     |
| wsrep_cert_deps_distance     | 20.630228                                             |
| wsrep_cert_index_size        | 94                                                    |
| wsrep_cert_interval          | 0.003802                                              |
| wsrep_cluster_conf_id        | 6                                                     |
| wsrep_cluster_size           | 3                                                     |
| wsrep_cluster_state_uuid     | f785b691-7513-11e8-bfdf-c2caa6c57ac0                  |
| wsrep_cluster_status         | Primary                                               |
| wsrep_commit_oooe            | 0.000000                                              |
| wsrep_commit_oool            | 0.000000                                              |
| wsrep_commit_window          | 1.000000                                              |
| wsrep_connected              | ON                                                    |
| wsrep_desync_count           | 0                                                     |
| wsrep_evs_delayed            |                                                       |
| wsrep_evs_evict_list         |                                                       |
| wsrep_evs_repl_latency       | 0/0/0/0/0                                             |
| wsrep_evs_state              | OPERATIONAL                                           |
| wsrep_flow_control_paused    | 0.000091                                              |
| wsrep_flow_control_paused_ns | 8931145224                                            |
| wsrep_flow_control_recv      | 19                                                    |
| wsrep_flow_control_sent      | 19                                                    |
| wsrep_gcomm_uuid             | 39a7ce90-7522-11e8-bbbc-feb5b5a38145                  |
| wsrep_incoming_addresses     | 10.212.29.38:3306,10.212.29.40:3306,10.212.29.39:3306 |
| wsrep_last_committed         | 1128                                                  |
| wsrep_local_bf_aborts        | 0                                                     |
| wsrep_local_cached_downto    | 77                                                    |
| wsrep_local_cert_failures    | 0                                                     |
| wsrep_local_commits          | 254                                                   |
| wsrep_local_index            | 0                                                     |
| wsrep_local_recv_queue       | 0                                                     |
| wsrep_local_recv_queue_avg   | 2.058603                                              |
| wsrep_local_recv_queue_max   | 29                                                    |
| wsrep_local_recv_queue_min   | 0                                                     |
| wsrep_local_replays          | 0                                                     |
| wsrep_local_send_queue       | 0                                                     |
| wsrep_local_send_queue_avg   | 0.000000                                              |
| wsrep_local_send_queue_max   | 1                                                     |
| wsrep_local_send_queue_min   | 0                                                     |
| wsrep_local_state            | 4                                                     |
| wsrep_local_state_comment    | Synced                                                |
| wsrep_local_state_uuid       | f785b691-7513-11e8-bfdf-c2caa6c57ac0                  |
| wsrep_protocol_version       | 7                                                     |
| wsrep_provider_name          | Galera                                                |
| wsrep_provider_vendor        | Codership Oy <info@codership.com>                     |
| wsrep_provider_version       | 25.3.19(r3667)                                        |
| wsrep_ready                  | ON                                                    |
| wsrep_received               | 802                                                   |
| wsrep_received_bytes         | 340485                                                |
| wsrep_repl_data_bytes        | 62325                                                 |
| wsrep_repl_keys              | 1074                                                  |
| wsrep_repl_keys_bytes        | 14986                                                 |
| wsrep_repl_other_bytes       | 0                                                     |
| wsrep_replicated             | 278                                                   |
| wsrep_replicated_bytes       | 95103                                                 |
| wsrep_thread_count           | 2                                                     |
+------------------------------+-------------------------------------------------------+
58 rows in set (0.00 sec)

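wsrep_cluster_size is 3, meaning the cluster has three nodes.

wsrep_cluster_status is Primary, meaning the node belongs to the primary component and can serve reads and writes normally.

wsrep_ready is ON, meaning the node is ready to accept queries and the cluster is operating normally.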
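These checks are easy to script, for example in a monitoring probe. The Python sketch below assumes two things. First, the status comes from `mysql -N -B -e "SHOW GLOBAL STATUS LIKE 'wsrep%'"`, which prints one tab-separated `name<TAB>value` pair per line. Second, a healthy node should also report `wsrep_local_state_comment` = `Synced`, as in the table above. The function names are hypothetical.

```python
def parse_status(batch_output: str) -> dict[str, str]:
    """Parse `mysql -N -B` output (name<TAB>value per line) into a dict."""
    status = {}
    for line in batch_output.splitlines():
        name, sep, value = line.partition("\t")
        if sep:
            status[name] = value
    return status


def galera_problems(status: dict[str, str], expected_size: int = 3) -> list[str]:
    """Return a list of problems; an empty list means the node looks healthy."""
    checks = [
        ("wsrep_cluster_size", str(expected_size)),   # all nodes joined
        ("wsrep_cluster_status", "Primary"),          # in the primary component
        ("wsrep_ready", "ON"),                        # accepting queries
        ("wsrep_local_state_comment", "Synced"),      # not donor/joiner
    ]
    problems = []
    for name, expected in checks:
        actual = status.get(name)
        if actual != expected:
            problems.append(f"{name}={actual!r}, expected {expected!r}")
    return problems
```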

4. Deploy an HA Redis cluster

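Harbor 1.5.0 and 1.5.1 have a bug that prevents them from using a Redis cluster, so only a single Redis instance works (see this issue for details). The issue mentions the Job Service, but the UI component also stores token data in Redis and likewise cannot use a Redis cluster. Logging in then fails with an error saying the token cannot be found: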

Jun 22 17:26:19 172.18.0.1 ui[8651]: 2018/06/22 09:26:19 #033[1;31m[E] [server.go:2619] MOVED 10216 10.212.29.39:7001#033[0m
Jun 22 17:26:19 172.18.0.1 ui[8651]: 2018/06/22 09:26:19 #033[1;44m[D] [server.go:2619] | 10.212.29.40|#033[41m 503 #033[0m| 636.771µs| nomatch|#033[44m GET #033[0m /service/token#033[0m

The Harbor team plans to fix this in Sprint 35. Until then, you can skip the Redis cluster steps below and run a single standalone Redis instance instead.
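The `MOVED 10216 10.212.29.39:7001` line in the log is a Redis Cluster redirect. Every key hashes to one of 16384 slots, and a node that does not own a key's slot replies with MOVED and the owner's address. A client that does not follow the redirect, as Harbor 1.5.x's UI apparently does not, surfaces this as an error. The Python sketch below computes the slot as the Redis Cluster specification describes: CRC16 (XMODEM variant) of the key, or of its non-empty `{...}` hash tag, modulo 16384. The function names are ours.

```python
def crc16_xmodem(data: bytes) -> int:
    """CRC16-CCITT, XMODEM variant: poly 0x1021, init 0, no reflection."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_hash_slot(key: bytes) -> int:
    """Map a key to its Redis Cluster hash slot (0..16383).

    If the key contains '{' followed later by '}' with at least one byte in
    between, only that substring (the hash tag) is hashed.
    """
    start = key.find(b"{")
    if start != -1:
        end = key.find(b"}", start + 1)
        if end != -1 and end != start + 1:
            key = key[start + 1:end]
    return crc16_xmodem(key) % 16384
```

For example, `key_hash_slot(b"foo")` is 12182, matching what `CLUSTER KEYSLOT foo` reports. We do not know the exact key names Harbor uses for tokens, so this only illustrates why a single-node client breaks against a cluster.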

1) Build Redis

# yum install gcc-c++ tcl ruby rubygems
# wget http://download.redis.io/releases/redis-4.0.10.tar.gz
# tar xzvf redis-4.0.10.tar.gz
# cd redis-4.0.10
# make
# make PREFIX=/usr/local/redis/ install

2) Prepare the Redis cluster environment

# mkdir -p /usr/local/redis-cluster/{7001,7002}
# find /usr/local/redis-cluster -mindepth 1 -maxdepth 1 -type d | xargs -n 1 cp /usr/local/redis/bin/*
# find /usr/local/redis-cluster -mindepth 1 -maxdepth 1 -type d | xargs -n 1 cp redis.conf
# cp src/redis-trib.rb /usr/local/redis-cluster/

In each of /usr/local/redis-cluster/{7001,7002}, set the following four parameters in redis.conf, with port matching the directory name:

bind 0.0.0.0
port 7001
daemonize yes
cluster-enabled yes
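Editing the two copies by hand is error-prone, especially the port. The Python sketch below rewrites a copied redis.conf for a given port. It assumes the stock redis.conf layout, where directives are `name value` lines and comments start with `#`. Active lines for the four directives are replaced, commented examples are left alone, and any directive that is missing (such as the commented-out `cluster-enabled`) is appended. The function name is hypothetical.

```python
def render_redis_conf(base: str, port: int) -> str:
    """Return `base` with bind/port/daemonize/cluster-enabled set for `port`."""
    wanted = {
        "bind": "0.0.0.0",
        "port": str(port),
        "daemonize": "yes",
        "cluster-enabled": "yes",
    }
    out, seen = [], set()
    for line in base.splitlines():
        parts = line.split(None, 1)
        key = parts[0].lower() if parts else ""
        if key in wanted:
            # Replace an active directive; commented lines start with '#'
            # and therefore never match.
            out.append(f"{key} {wanted[key]}")
            seen.add(key)
        else:
            out.append(line)
    # Append directives that had no active line in the base file.
    for key, value in wanted.items():
        if key not in seen:
            out.append(f"{key} {value}")
    return "\n".join(out) + "\n"
```

Usage: read the original redis.conf once, then write `render_redis_conf(text, 7001)` and `render_redis_conf(text, 7002)` into the matching directories.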

3) Install dependencies

redis-trib.rb uses the redis gem. redis-4.0.1.gem requires Ruby 2.2.2 or later, and ruby22 in turn depends on openssl-libs-1.0.2, so install the packages in this order:
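Because this ordering hinges on the Ruby version, a quick guard can confirm that the shell opened by `scl enable rh-ruby25 bash` really provides Ruby 2.2.2 or newer before you run `gem install redis`. This Python sketch assumes the usual `ruby -v` output format (`ruby X.Y.Zp...`); the function name is hypothetical.

```python
import re


def ruby_version_ok(version_output: str, minimum=(2, 2, 2)) -> bool:
    """Return True if `ruby -v` output reports at least `minimum`."""
    m = re.search(r"ruby (\d+)\.(\d+)\.(\d+)", version_output)
    if not m:
        raise ValueError(f"unrecognized ruby -v output: {version_output!r}")
    return tuple(int(part) for part in m.groups()) >= minimum
```

For example: `ruby_version_ok(subprocess.run(["ruby", "-v"], capture_output=True, text=True).stdout)`. CentOS 7's base Ruby 2.0.x should fail this check, while rh-ruby25 should pass.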

# wget http://mirror.centos.org/centos/7/os/x86_64/Packages/openssl-1.0.2k-12.el7.x86_64.rpm
# wget http://mirror.centos.org/centos/7/os/x86_64/Packages/openssl-libs-1.0.2k-12.el7.x86_64.rpm
# yum localinstall openssl-1.0.2k-12.el7.x86_64.rpm openssl-libs-1.0.2k-12.el7.x86_64.rpm
# yum install centos-release-scl-rh
# yum install rh-ruby25
# scl enable rh-ruby25 bash
# gem install redis

4) Run redis-server

Run the following on every node:

# cd /usr/local/redis-cluster/7001/; ./redis-server redis.conf
# cd /usr/local/redis-cluster/7002/; ./redis-server redis.conf

5) Create the cluster

# ./redis-trib.rb create --replicas 1 10.212.29.38:7001 10.212.29.39:7001 10.212.29.40:7001 10.212.29.38:7002 10.212.29.39:7002 10.212.29.40:7002
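With `--replicas 1`, redis-trib.rb gives each master one replica, so the six instances become three masters and three replicas, and the 16384 hash slots are split among the masters in contiguous ranges. The Python sketch below models only the slot split: an even division with rounding, similar to the ranges redis-trib.rb prints. It does not model how redis-trib.rb chooses masters or places replicas on hosts, and the function names are hypothetical.

```python
CLUSTER_HASH_SLOTS = 16384


def master_count(num_nodes: int, replicas: int) -> int:
    """Each master needs `replicas` extra nodes."""
    return num_nodes // (replicas + 1)


def split_slots(num_masters: int) -> list[tuple[int, int]]:
    """Split all hash slots into contiguous (first, last) ranges, one per master."""
    per_master = CLUSTER_HASH_SLOTS / num_masters
    ranges, first, cursor = [], 0, 0.0
    for i in range(num_masters):
        last = round(cursor + per_master - 1)
        if i == num_masters - 1 or last > CLUSTER_HASH_SLOTS - 1:
            last = CLUSTER_HASH_SLOTS - 1   # the last master takes the remainder
        last = max(last, first)
        ranges.append((first, last))
        first = last + 1
        cursor += per_master
    return ranges
```

With three masters this gives 0-5460, 5461-10922 and 10923-16383, so slot 10216 from the MOVED error above falls in the second range.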

5. Deploy the Harbor cluster

Run the following steps on every Harbor node.

1) Download the Harbor installer

Release information is listed on the Harbor Releases page. The installer comes in offline and online flavors. The offline installer bundles the Docker images needed for deployment, while the online installer pulls them from Docker Hub during installation. We use the 1.5.1 offline installer:

# wget https://storage.googleapis.com/harbor-releases/release-1.5.0/harbor-offline-installer-v1.5.1.tgz
# tar xvf harbor-offline-installer-v1.5.1.tgz

2) Edit harbor.cfg

harbor.cfg holds the parameters used by the Harbor components. The key ones cover the domain name, database, Redis, backend storage, and LDAP.

Domain name, which must resolve to a node IP or the VIP:

hostname = registry.example.com

Database:

db_host = 10.212.29.38
db_password = password
db_port = 3306
db_user = registry

Redis:

redis_url = 10.212.29.38:6379

Backend storage:

registry_storage_provider_name = swift
registry_storage_provider_config = authurl: http://10.32.3.70:7480/auth/v1, username: registry:swift, password: xFTlZ1Lc5tgH78E7SSHYDmRuUyDK08BariFuR6jO, container: registry.example.com
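registry_storage_provider_config packs the Swift driver options into one `key: value, key: value` string, so a missing comma or key is easy to overlook. This Python sanity check is hypothetical and is not how Harbor's own prepare script parses the line. It splits pairs on commas, which assumes no value contains a comma, and splits each pair on its first colon, so URLs and `registry:swift` survive. It then checks the four options that the Registry configuration reference lists as required for the Swift driver.

```python
# Options the registry's Swift storage driver requires, per the Registry
# configuration reference; optional ones (region, tenant, ...) are not checked.
REQUIRED_SWIFT_KEYS = {"authurl", "username", "password", "container"}


def parse_provider_config(raw: str) -> dict[str, str]:
    """Parse 'key: value, key: value' into a dict (values must not contain commas)."""
    config = {}
    for pair in raw.split(","):
        key, sep, value = pair.partition(":")   # split on the first ':' only
        if not sep:
            raise ValueError(f"expected 'key: value', got {pair.strip()!r}")
        config[key.strip()] = value.strip()
    return config


def missing_swift_keys(config: dict[str, str]) -> set[str]:
    """Return required keys that are absent or empty."""
    return REQUIRED_SWIFT_KEYS - {k for k, v in config.items() if v}
```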

LDAP (these parameters are only needed if you use LDAP authentication):

auth_mode = ldap_auth
ldap_url = ldap://10.2.208.11:389
ldap_searchdn = CN=admin,OU=Infra,OU=Tech,DC=example,DC=com
ldap_search_pwd = password
ldap_basedn = OU=Tech,DC=example,DC=com
ldap_filter = (objectClass=person)
ldap_uid = sAMAccountName
ldap_scope = 3
ldap_timeout = 5
ldap_verify_cert = false
ldap_group_basedn = OU=Tech,DC=example,DC=com
ldap_group_filter = (objectclass=group)
ldap_group_gid = sAMAccountName
ldap_group_scope = 3
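When LDAP logins fail, it helps to reproduce the user lookup with a tool such as ldapsearch. We assume, without having checked Harbor's source, that the lookup ANDs ldap_filter with `ldap_uid=<username>`. The Python sketch below builds such a filter and escapes the username per RFC 4515. Both function names are hypothetical.

```python
def escape_filter_value(value: str) -> str:
    """Escape characters that are special in LDAP filter values (RFC 4515)."""
    out = []
    for ch in value:
        if ch in "\\*()\x00":
            out.append("\\%02x" % ord(ch))
        else:
            out.append(ch)
    return "".join(out)


def user_search_filter(base_filter: str, uid_attr: str, username: str) -> str:
    """Combine ldap_filter and ldap_uid=<username> into one AND filter."""
    if not base_filter.startswith("("):
        base_filter = f"({base_filter})"
    return f"(&{base_filter}({uid_attr}={escape_filter_value(username)}))"
```

For example, `user_search_filter("(objectClass=person)", "sAMAccountName", "alice")` yields `(&(objectClass=person)(sAMAccountName=alice))`, which can be passed to ldapsearch together with ldap_basedn and ldap_searchdn.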

3) Initialize the database

MariaDB [(none)]> source harbor/ha/registry.sql
MariaDB [(none)]> grant all privileges on registry.* to 'registry'@'%' identified by 'password';

4) Deploy Harbor

# cd harbor
# ./install.sh --ha

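The installation runs in four steps. It essentially generates the YAML files for a set of containers from harbor.cfg and then starts them with docker-compose. Because this is an offline install, no images need to be downloaded from the Internet, so deployment is fast and takes only a few minutes. When it finishes, check the container status with docker-compose: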

# docker-compose ps
Name                 Command                          State          Ports
-------------------------------------------------------------------------------------------------------------------------------------
harbor-adminserver   /harbor/start.sh                 Up (healthy)
harbor-db            /usr/local/bin/docker-entr ...   Up (healthy)   3306/tcp
harbor-jobservice    /harbor/start.sh                 Up
harbor-log           /bin/sh -c /usr/local/bin/ ...   Up (healthy)   127.0.0.1:1514->10514/tcp
harbor-ui            /harbor/start.sh                 Up (healthy)
nginx                nginx -g daemon off;             Up (healthy)   0.0.0.0:443->443/tcp, 0.0.0.0:4443->4443/tcp, 0.0.0.0:80->80/tcp
redis                docker-entrypoint.sh redis ...   Up             6379/tcp
registry             /entrypoint.sh serve /etc/ ...   Up (healthy)   5000/tcp

All component containers are up. You can now open a node IP, the VIP, or the domain name in a browser to reach the Harbor web UI.

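Using whichever load balancer you are comfortable with, put MariaDB, Redis, Ceph radosgw, and Harbor's HTTP endpoint behind VIPs. Then change every address in harbor.cfg to the corresponding VIP, which gives a fully HA deployment.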
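Before switching harbor.cfg over, a TCP-level check can catch firewall or bind-address mistakes. Run it first against every backend the load balancer will point at, and later against each VIP. This Python sketch only checks that a TCP handshake succeeds, not that the service behind it is healthy. The backend list reuses the addresses from this article (the single Redis instance on 10.212.29.38:6379 and radosgw on 10.32.3.70:7480), and the helper names are hypothetical.

```python
import socket

# Backends referenced in this article; replace or extend with the VIPs.
BACKENDS = [
    ("10.212.29.38", 3306), ("10.212.29.39", 3306), ("10.212.29.40", 3306),  # MariaDB
    ("10.212.29.38", 6379),                                                  # Redis
    ("10.32.3.70", 7480),                                                    # radosgw
]


def tcp_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout`."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_backends(backends, timeout: float = 2.0) -> dict[str, bool]:
    """Map "host:port" to whether it accepted a TCP connection."""
    return {f"{host}:{port}": tcp_reachable(host, port, timeout) for host, port in backends}
```

For example, `print(check_backends(BACKENDS))` on a Harbor node lists any address that refuses connections or times out.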

References

Harbor High Availability Guide

Deploying a three-master MariaDB Galera Cluster (10.1.21-MariaDB) on CentOS 7.2

Setting up standalone and clustered Redis

Registry Configuration Reference #storage